Lessons from Instacart and Snowflake: Why We Need a Paradigm Shift

In the LLM and GenAI era, we still optimize databases the same way: manual, expensive, one query at a time.

When reports surfaced about Instacart’s Snowflake spend, the news caught my interest. Analysts debated whether Instacart’s savings came from optimization, pre-purchased credits, or platform changes.

Snowflake highlighted a presentation from Instacart titled “How Instacart Optimized Snowflake Costs by 50%.” I attended Snowflake Summit and later rewatched the presentation. In my view, it holds four key lessons for the database community.

I’m not criticizing Instacart. In fact, I appreciate how much work goes into optimizing database workloads at large companies. As a computer science professor and database researcher, I have spent years optimizing complex query workloads. A better question is whether we should still optimize workloads one query at a time in an AI-driven world. Is this approach scalable for other organizations?

Instacart’s Three-Step Process For Snowflake Optimization

If you take the time to watch the Instacart video, you will see that they follow a three-step process for Snowflake optimization.

Step 1: Find The Most Expensive Queries

Instacart’s data team built company-wide infrastructure for tracking query costs. A summary view feeds into dashboards, which the team uses to identify expensive queries and notify the Directly Responsible Individual (DRI) who wrote each one.
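To make Step 1 concrete, here is a minimal sketch of the ranking logic, assuming a query history in which each run carries a normalized query fingerprint, its author, and an estimated credit cost. The `QueryRecord` fields, the `top_expensive` function, and the sample data are all hypothetical; they are not Instacart’s tooling or Snowflake’s schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class QueryRecord:
    # Hypothetical fields; a real query history will use different names and units.
    fingerprint: str  # normalized query text or hash
    owner: str        # the DRI who wrote the query
    credits: float    # estimated credits consumed by this run


def top_expensive(records, n):
    """Sum cost per query fingerprint and return the n most expensive as (fingerprint, owner, cost)."""
    totals = defaultdict(float)
    owners = {}
    for r in records:
        totals[r.fingerprint] += r.credits
        owners[r.fingerprint] = r.owner
    ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
    return [(fp, owners[fp], cost) for fp, cost in ranked[:n]]


history = [
    QueryRecord("q_orders_daily", "alice", 12.0),
    QueryRecord("q_orders_daily", "alice", 11.5),
    QueryRecord("q_churn_model", "bob", 30.0),
    QueryRecord("q_adhoc_lookup", "carol", 0.2),
]

# "Notify" each DRI of their expensive query (here, just print a message).
for fingerprint, owner, cost in top_expensive(history, 2):
    print(f"notify {owner}: {fingerprint} used {cost:.1f} credits")
```

Aggregating by fingerprint rather than by individual run matters: a query that is cheap per run but executes many times can outrank a single heavy report.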

Step 2: Manually Optimize Queries

The DRI must analyze and optimize the query manually. The main technique they emphasize is pushing filters down, that is, applying filters as early as possible in the query plan. This works, but complex queries make it difficult to apply consistently. Rather than relying on a central team, the company spreads this work across the hundreds of individuals who write queries. This creates accountability but introduces significant opportunity cost across the organization.
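For readers unfamiliar with filter pushdown, the toy example below illustrates the idea on in-memory lists: applying a filter before a join produces the same result while feeding fewer rows into the join. The tables, the `hash_join` helper, and the `total > 30` predicate are hypothetical, and a real optimizer rewrites query plans rather than Python lists.

```python
# Toy tables as lists of dicts (hypothetical data).
orders = [
    {"order_id": 1, "store_id": 10, "total": 25.0},
    {"order_id": 2, "store_id": 20, "total": 40.0},
    {"order_id": 3, "store_id": 10, "total": 15.0},
]
stores = [
    {"store_id": 10, "region": "west"},
    {"store_id": 20, "region": "east"},
]


def hash_join(left, right, key):
    """Equi-join two lists of dicts on `key` by building a hash index on `right`."""
    index = {}
    for row in right:
        index.setdefault(row[key], []).append(row)
    return [{**row, **match} for row in left for match in index.get(row[key], [])]


# Plan A: join everything, then filter.
plan_a = [r for r in hash_join(orders, stores, "store_id") if r["total"] > 30]

# Plan B: push the filter on `orders` below the join, so the join sees fewer rows.
big_orders = [o for o in orders if o["total"] > 30]
plan_b = hash_join(big_orders, stores, "store_id")

assert plan_a == plan_b  # same answer either way
print(f"rows entering join: plan A={len(orders)}, plan B={len(big_orders)}")
```

On three rows the difference is trivial; on a fact table with billions of rows, the same rewrite can decide whether a query takes seconds or hours.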

Step 3: Find The Right Size For The Warehouse

In addition to query optimization, the team matches each query to an appropriate warehouse size. Too big and you are needlessly spending money. Too small and the query will run too slowly. How do they pick the right size? They run experiments across warehouse sizes and compare cost and performance.
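A rough sketch of that experiment loop is below. It assumes you have already measured one query’s runtime on several warehouse sizes and picks the cheapest size that meets a latency target. The credits-per-hour figures are Snowflake’s published rates for standard warehouses (doubling at each size step; check current pricing for your account); the runtimes, the SLA, and `pick_size` itself are hypothetical, and the cost model ignores the minimum billing on warehouse resume and any idle time.

```python
# Credits per hour for Snowflake standard warehouses, X-Small through 2X-Large.
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8, "XL": 16, "2XL": 32}


def pick_size(runtimes_s, sla_s):
    """Return (size, credits) for the cheapest size whose measured runtime meets the SLA.

    runtimes_s maps warehouse size -> measured runtime in seconds (hypothetical
    trial runs). Cost is simplified to credits/hour * runtime; returns None if
    no tested size meets the SLA.
    """
    candidates = []
    for size, seconds in runtimes_s.items():
        if seconds <= sla_s:
            credits = CREDITS_PER_HOUR[size] * seconds / 3600
            candidates.append((credits, size))
    if not candidates:
        return None
    credits, size = min(candidates)
    return size, credits


# Hypothetical trial results for one query, in seconds.
trials = {"S": 1800, "M": 800, "L": 420, "XL": 300}
print(pick_size(trials, sla_s=900))
```

Note that the answer depends on the latency target: with `sla_s=500`, the same trials would select `L` instead of `M`, and every new query or workload change means running the trials again.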

This is admittedly a very brief summary of their methodology. I encourage you to watch their presentation to get the full effect. At least six other customers presented similar optimization and cost-saving approaches at Snowflake Summit.

Four Lessons We Can Learn From Instacart

I know this optimization methodology doesn’t only happen at Instacart. Teams at companies like Uber and Microsoft follow similar methods to optimize query workloads and reduce infrastructure costs. Our community still optimizes one query at a time, even with cloud data warehouses. So here are the lessons I think we can all take away from the Instacart story.

Lesson 1: Everyone’s Ignoring The Long Tail

Instacart’s approach focuses on identifying the most expensive queries, which exposes an important limitation: even large teams cannot analyze every query. Despite this investment, they reach only a small fraction of the workload. For example, if you inspect 1,000 queries, you will prioritize the slowest and most frequent ones. In large systems, this ignores the many smaller queries that still drive significant cost. In other words, the 80-20 rule doesn’t always hold for database workloads: there is often a long tail of faster queries that, because of their sheer number, still account for a large portion of your overall bill. Imagine the potential if we could analyze and optimize all queries, not just a tiny fraction of the most expensive ones.
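A quick numerical sketch shows how this can happen. It assumes a synthetic workload of 10,000 distinct queries whose costs follow a Zipf-like law (the i-th most expensive query costs in proportion to 1/i); the exponent and workload size are illustrative assumptions, not measurements from Instacart or anyone else.

```python
def top_k_share(costs, k):
    """Fraction of total cost contributed by the k most expensive queries."""
    ranked = sorted(costs, reverse=True)
    return sum(ranked[:k]) / sum(ranked)


# Synthetic workload: cost of the i-th most expensive query is proportional to 1/i.
# The exponent of 1 is an assumption for illustration, not measured data.
n = 10_000
costs = [1.0 / i for i in range(1, n + 1)]

k = n // 100  # inspect only the top 1% of queries
share = top_k_share(costs, k)
print(f"top {k} queries: {share:.0%} of cost; remaining {n - k}: {1 - share:.0%}")
```

Under these assumptions, inspecting the top 1% of queries covers only roughly half of the total cost; the other half is spread across the remaining 9,900 queries. A smaller exponent (a flatter distribution) would make the tail’s share even larger.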

Lesson 2: Asking More People To Do Manual Work Doesn’t Make It Less Manual (Or Less Expensive)

Even when Instacart flags expensive queries, there’s no silver bullet. They rely on the DRI to fix them. With hundreds of users writing queries, optimization is effectively crowdsourced. This promotes accountability, but the data team’s role is mainly to enable it, through tools like tagging, query profiles, and performance insights that let teams debug their own queries.

But is this a wise use of the company’s resources? There are several reasons to doubt it:

- Not every user should be responsible for query optimization. Is it wise to ask an ML expert, a supply chain guru, or a marketing operations expert to spend their time writing perfect queries, or worse, optimizing queries they wrote months ago? Weren’t these individuals recruited for a different skill set, to perform a different business function?

- Distributing manual work does not reduce effort; it spreads it across more people. You are still spending a lot of time hand-tweaking SQL queries, one query and one person at a time, and that adds up to a lot of money. This is arguably even worse, because you can no longer track how much of the organization’s resources go into this manual work. The cost is hidden and distributed.

In effect, the company still pays the same tax for manual optimization, but now more people pay it, and some of them spend significant time on work outside their expertise. Could these individuals be doing something more valuable for Instacart’s business?

Lesson 3: “Stare At Your Query” Is Still Rampant In Our Industry

Much of this infrastructure seems geared toward 1) highlighting the expensive and frequently run queries that humans need to examine, and 2) providing a user-friendly view of the query plan (the query profile, in Snowflake’s case). But all this essentially does is reveal an expensive query and the expensive operator within it. The DRI must then examine it long enough to work out how to speed it up. The only advice I kept hearing was to look for opportunities to push down a filter. Real-world queries are often complex and difficult to optimize manually; in many cases you cannot even see the entire query on screen without scrolling through multiple pages. Likewise, what if the query profile simply shows that you are scanning several terabytes of data? How do you expect your end user to solve that?

We need better solutions than this. As a data community, we need to provide better tooling and more specific advice to our end users if we expect them to “solve” our cost and performance problems. I’d go further and argue that it’s often impossible to optimize your workload one query at a time. Instead, you need to identify the common blocks of computation across many queries and find a way to reuse that computation (at the risk of sounding self-promotional, that’s one of the things our query acceleration product does).
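As a toy illustration of what finding common computation means, the sketch below represents query plans as nested tuples and counts which subplans appear in more than one query. The plan encoding and the `subtrees`/`shared_subplans` helpers are hypothetical; this is a simple common-subexpression count, not how Keebo’s product works, and a real system must also decide whether reusing a shared result is actually cheaper than recomputing it.

```python
from collections import Counter


def subtrees(plan):
    """Yield every subtree of a plan encoded as nested tuples: (op, arg, *children)."""
    yield plan
    for child in plan[1:]:
        if isinstance(child, tuple):
            yield from subtrees(child)


def shared_subplans(plans, min_queries=2):
    """Map each non-scan subplan to the number of queries containing it, if >= min_queries."""
    counts = Counter()
    for plan in plans:
        counts.update(set(subtrees(plan)))  # count each subplan once per query
    return {sp: c for sp, c in counts.items() if c >= min_queries and sp[0] != "scan"}


# Hypothetical workload: two queries aggregate the same join in different ways.
west_join = ("join", "store_id",
             ("scan", "orders"),
             ("filter", "region = 'west'", ("scan", "stores")))
q1 = ("agg", "sum(total)", west_join)
q2 = ("agg", "count(*)", west_join)
q3 = ("agg", "avg(total)", ("scan", "orders"))

for subplan, count in shared_subplans([q1, q2, q3]).items():
    print(count, subplan[0], subplan[1])
```

Here the join of `orders` with west-region `stores`, and the filter beneath it, are shared by two of the three queries, making them candidates to compute once and reuse rather than optimize separately in each query.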

Lesson 4: “Trial-And-Error” Is The Modern Way Of Saying “We Don’t Know”

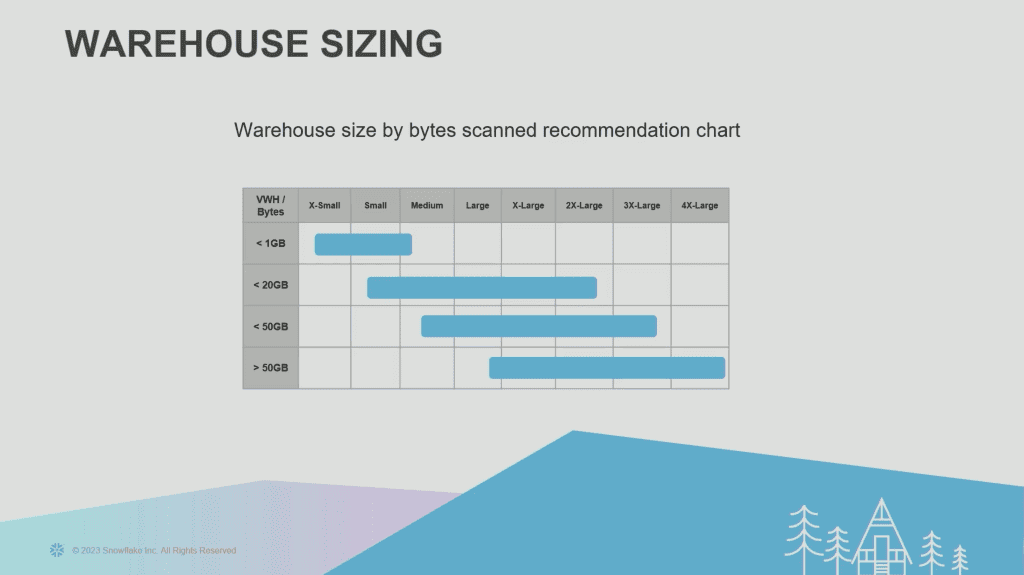

One of the most common ways of optimizing Snowflake, or cloud warehouses in general, is rightsizing your warehouses. The problem is that even Snowflake’s official advice is to choose the optimal size for your warehouse through trial-and-error. Their advice, which Instacart follows, is to track bytes scanned for each query and then choose the appropriate size for your warehouse. Say your query scans less than 20 GB. According to Snowflake’s own advice, as shown in this illustration from Instacart’s presentation, the optimal warehouse size for you might be small, medium, large, x-large, or even 2x-large. But which one? Well, you won’t know until you try and try again.

No rule of thumb is precise, but even as a rule of thumb this is comically unhelpful. Imagine how many hours of experimentation will be required to find the right size. Even if you manage to get it right, there’s no guarantee that it will work tomorrow or a week later when the workload changes. There is also no guarantee that the size that’s optimal at 9pm will also be optimal during 9am peak traffic. So what should we do? Well, your poor engineers have to keep repeating this tedious and error-prone process over and over again.
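One small mitigation is to detect when a previously chosen size has likely gone stale, so the experiments can be rerun. The sketch below uses a simple heuristic assumed here, not something Snowflake or Instacart recommends: it compares the median bytes scanned in a recent window against the baseline from when the size was chosen. The `needs_resizing` function, the 25% tolerance, and the sample values are hypothetical, and bytes scanned is only one signal; concurrency at peak hours would need similar tracking.

```python
from statistics import median


def needs_resizing(baseline_gb, recent_gb, tolerance=0.25):
    """Flag a warehouse for re-testing when the recent median GB scanned drifts
    more than `tolerance` (a fraction) away from the baseline median.

    The 25% default is an arbitrary, hypothetical threshold.
    """
    base = median(baseline_gb)
    now = median(recent_gb)
    return abs(now - base) / base > tolerance


# Hypothetical GB-scanned samples for one warehouse.
baseline = [12, 15, 14, 13, 16]      # measured when the size was last chosen (off-peak)
morning_peak = [22, 25, 19, 24, 21]  # measured during morning peak traffic
print(needs_resizing(baseline, morning_peak))
```

Even when the check fires, it only tells you to repeat the trial-and-error process; it does not tell you which size to pick.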

A Better Way: AI With Data Learning

It’s incredible that in the LLM and GenAI era, we’re still approaching our database problems the same way we have for the last 40 years: as a manual, expensive, one-query-at-a-time process.

Instacart’s approach requires significant engineering effort across teams. At roughly $250K per FTE, an effort equivalent to about 20 full-time engineers amounts to roughly a $5M first-year investment. Some costs, like tooling, can be amortized, but query optimization remains a recurring expense as new queries and data emerge. It may be worth it if it saves $10M annually, but many organizations can’t afford this, or may prefer to invest those resources elsewhere.
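The back-of-the-envelope math can be written out explicitly. The $250K per FTE, the $5M first-year cost, and the $10M annual savings come from the text above; the `recurring_fraction` (how much of the first-year effort recurs each year as new queries and data arrive) and the $2M comparison case are hypothetical parameters added for illustration.

```python
COST_PER_FTE = 250_000       # from the text: roughly $250K per FTE per year
FIRST_YEAR_COST = 5_000_000  # from the text: about $5M in the first year


def net_savings(annual_savings, first_year_cost, recurring_fraction, years):
    """Cumulative savings minus optimization effort over `years` years.

    recurring_fraction is a hypothetical share of the first-year effort that
    repeats every later year; it is not a figure from Instacart's talk.
    """
    effort = first_year_cost + (years - 1) * recurring_fraction * first_year_cost
    return years * annual_savings - effort


implied_ftes = FIRST_YEAR_COST / COST_PER_FTE
print(f"implied headcount: {implied_ftes:.0f} FTEs")

# A company saving $10M a year versus a hypothetical one saving $2M a year, over 3 years.
print(net_savings(10_000_000, FIRST_YEAR_COST, 0.5, 3))
print(net_savings(2_000_000, FIRST_YEAR_COST, 0.5, 3))
```

With half of the effort recurring each year, the $10M case nets about $20M over three years, while a company saving $2M a year ends up about $4M behind.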

Use automation for tasks that add little value or require repetitive manual effort. Researchers have developed techniques that automate query and workload optimization, and Keebo brings this academic research into production to reduce reliance on manual optimization. For example, with a specific form of AI that we at Keebo call Data Learning, you can now rewrite your slow queries on the fly with correctness guarantees. Similarly, many Snowflake customers have already started using Data Learning to optimize their warehouses automatically through automated Warehouse Optimization. If you want to explore further, review the research or see the product in action. If you don’t have time to read the technical papers, you can simply watch or book a live demo, then decide whether you still want to spend your precious engineering cycles on manual optimization.